Artificial Intelligence (AI) seems to be everywhere. A quick Google News search for “Artificial Intelligence” returns over 89 million articles on everything from how AI is taking over from humans to how it is helping cure cancer. If you are reading this article, then you’re probably interested in remote sensing, satellite imagery and the broader space and geospatial sector. You are probably also wondering how AI can be applied to remote sensing and, more specifically, feature extraction.

What is AI?

Artificial Intelligence is not a new concept. There are numerous science fiction stories dating back to the 19th century which deal with thinking machines, and many people since then have pondered the possibility of a machine that can think for itself. In 1950, Alan Turing published a paper suggesting that computers could be made to reason in the same way humans do. In 1956, this concept became a reality with Logic Theorist, a program written to mimic the problem-solving skills of a human. Since then, the field of AI research has been slowly developing and improving, with some notable achievements such as Deep Blue defeating the then world chess champion Garry Kasparov in 1997. If you are interested, Harvard University has a short article that covers the history of AI in more detail.

AI is a general term applied to a computer-based system that possesses some human-like intelligence. Machine Learning is a term frequently mentioned alongside AI. It is an approach applied in the development of AI that came to prominence in the 1980s and has since been used widely across a range of industries. Bayesian Pixel Classification, a technique widely implemented in remote sensing software, is an example of basic Machine Learning. Deep Learning is another, more recent development in applied Artificial Intelligence. Deep Learning uses advances in computer technology to train deep neural networks (including convolutional networks), which are loosely modelled on how a biological brain functions. While Machine Learning certainly has its uses, the development of Deep Learning over the last 10-15 years has made many of the AI systems of today possible, including the remote sensing techniques that Geospatial Intelligence Pty Ltd (GI) uses to deliver solutions for our clients. In all its forms, AI has proved to be a useful tool when used to efficiently derive information from data.

Even though AI has been around since the 50s, it has only come to prominence more recently. The reasons for this are complicated, but can be broadly broken into three parts. First, advances in computer hardware technology, in particular the use of Graphics Processing Units (GPUs), have made the massive processing required by Deep Learning possible, which has allowed the advancement of AI research. Second, the continued development of Deep Learning has made solving new problems with AI possible; for example, Deep Learning and hardware advancements have facilitated fast and accurate computer vision, which is used in self-driving cars. Third, the amount of data available globally, including in the geospatial domain, is growing exponentially. According to the International Data Corporation, more than 175 zettabytes (1 zettabyte is a billion terabytes) of data will be created, captured, copied, and consumed in the world by 2025. This exponential data growth has driven the development of new data analysis tools, including AI.

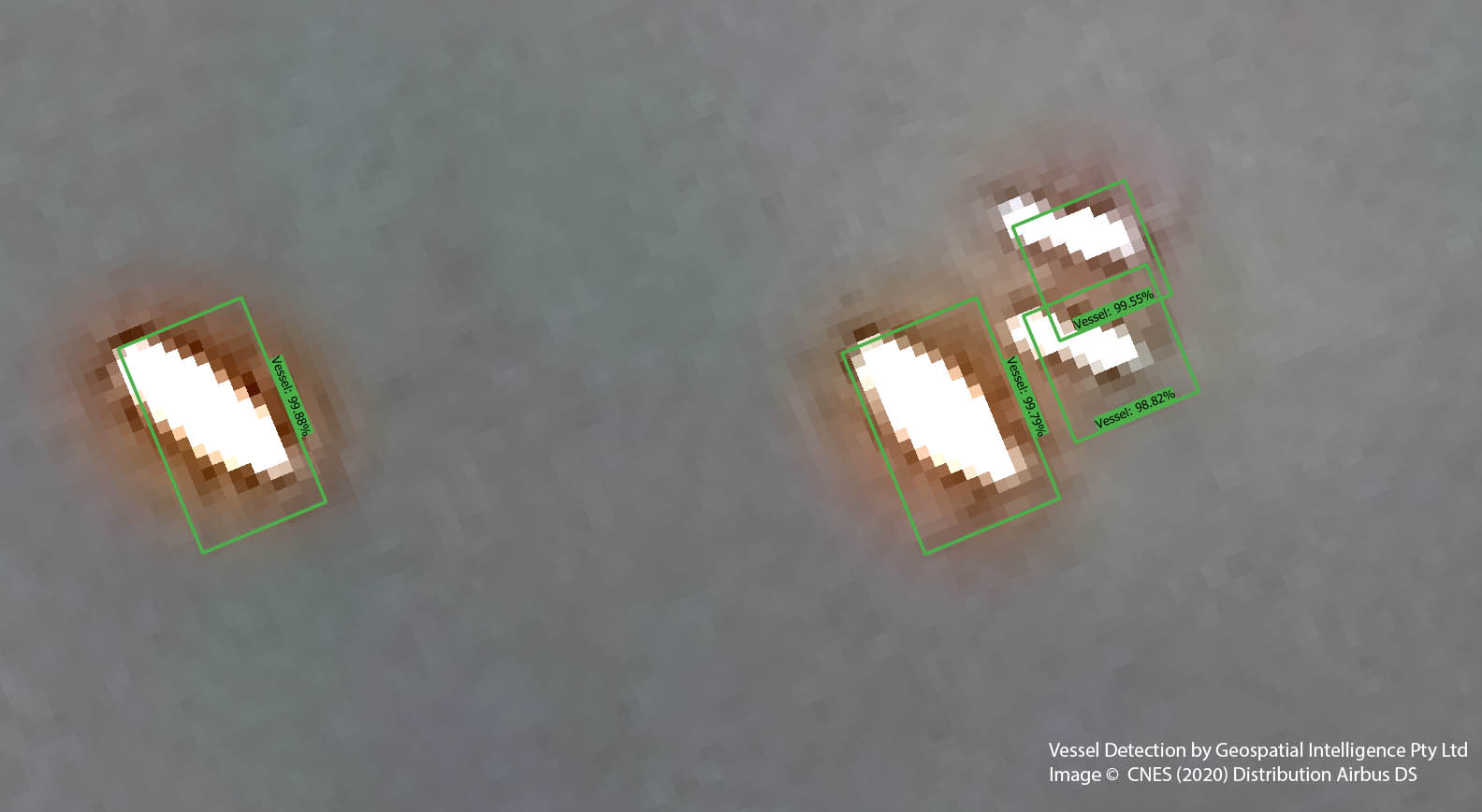

At GI we use the term Machine Intelligence (MI) to group these techniques together when focusing their application towards real-world problems. Using MI systems, we are able to fuse disparate data sources and sensor types to produce highly reliable, accurate and valuable information from the growing number of global data sources. We have also found MI technology to be a cost-effective and timely blend of techniques when applied to a diverse range of earth observation datasets. For example, GI’s latest MI vessel detection system can produce detection reports over large, very high-resolution satellite imagery scenes in seconds, whereas historically this task may have taken a human analyst hours.

Why AI in remote sensing?

AI in remote sensing is also not a new idea. As far back as 1977, the US Naval Research Laboratory was researching AI applications for remote sensing, and throughout the 90s and early 2000s academic papers were published on the use of a range of different AI techniques in the remote sensing and earth observation fields. The development of deep convolutional neural networks using GPUs for computer vision problems since the early 2000s, and the increasing availability of high-performance computing hardware, rapidly increased the performance and accuracy of the AI models applied to remote sensing tasks. The volume and resolution of satellite imagery have also been increasing substantially, making it apparent that new analysis tools were needed to manage the larger and more complicated datasets used by geospatial professionals.

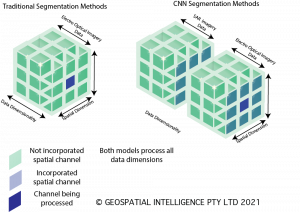

Convolutional Neural Networks (CNNs) are a form of Deep Learning algorithm heavily used in computer vision. They are also extremely well-suited to remote sensing, in particular to object detection and segmentation tasks. In object detection, the aim is to identify and classify objects and their location within an image, for example finding all the houses in a satellite image. Segmentation is a pixel-based classification of an image, such as identifying every road pixel in an image, making it ideal for feature extraction tasks. The reason that CNNs are so well-suited to these tasks is that they can capture the spatial, spectral, and even temporal dependencies within images and learn patterns. The spatial aspect of CNNs is particularly useful for remote sensing. Where a traditional pixel classification algorithm might use the spectral signature of a single pixel to determine its class, a CNN can use a square of 512x512 pixels (or more) and their related spectral information to determine the class of a single pixel. This means that a CNN can, for example, be trained to recognise linear features such as a road, based not only on a pixel’s spectral signature, but also on its shape and surrounding environment, in much the same way a human brain does. In fact, CNNs have some advantages over a human brain. Due to the way computer screens display satellite imagery, a human analyst can only see three colour channels at a time, and then only in roughly 8 bits of data per channel. Most electro-optical satellites capture imagery in four or more channels (red, green, blue, near infrared) and at 12-16 bits per channel. A CNN is able to process all of this information at once, whereas a human analyst would need to switch between channels and adjust the image “stretch” to be able to properly view the data.
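
To make the bit-depth point concrete, the sketch below shows one common way of producing a display “stretch”: a linear percentile stretch that squeezes raw sensor values into the 0-255 range a screen can show. The function names, the sample values and the 2%/98% default cut-offs are illustrative assumptions, not a description of any particular software package.

```python
def percentile(sorted_values, pct):
    """Nearest-rank percentile of an already sorted, non-empty list."""
    index = round((len(sorted_values) - 1) * pct / 100)
    return sorted_values[index]


def stretch_to_8bit(band, low_pct=2, high_pct=98):
    """Linearly rescale raw sensor values to 0-255 for display.

    Values outside the chosen percentiles are clipped, so detail in the
    darkest and brightest parts of the band is lost to the viewer.
    """
    ordered = sorted(band)
    low = percentile(ordered, low_pct)
    high = percentile(ordered, high_pct)
    if high == low:  # flat band: nothing to stretch
        return [0] * len(band)
    stretched = []
    for value in band:
        clipped = min(max(value, low), high)
        stretched.append(round(255 * (clipped - low) / (high - low)))
    return stretched


# Hypothetical 12-bit near-infrared samples (possible range 0-4095).
nir_samples = [0, 2048, 4095]
display_values = stretch_to_8bit(nir_samples, low_pct=0, high_pct=100)
```

A 12-bit band holds 4,096 distinct levels per pixel, so any stretch of this kind discards detail for the viewer, whereas a CNN can be given the raw values for every band.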

Clearly, CNNs have a lot of advantages over the more traditional remote sensing methodologies, but they also have some limitations. A CNN model can be trained to perform very well on a specific problem space, for example extracting road networks from very high-resolution imagery, but the model may not be very flexible. Training a model for a new problem space requires specialised skills, hardware, and time. The AI development process is discussed further in the next section.

The AI Process

The AI development process begins with a definition of the problem. The problem definition considers what the output of the system should look like, what inputs are available (different imagery types, elevation models and other data that might be fused together), and the level of accuracy the model needs to achieve. The decisions on each of these help determine the techniques to be used, the type and amount of training the model will require and the appropriate CNN architecture.

After the problem has been defined and the technical components of the model chosen, the most time-consuming process of AI development begins: the compilation of training data, model training and testing. This is an iterative process and often takes more than 90% of the overall time allocated to a project. Training data is created by first acquiring sample imagery from the problem space. Imagery analysts then create label data. In the case of object detection these labels are normally bounding boxes with classification labels, and in the case of segmentation the label data is a class-based pixel mask. It is this label data that teaches the AI model what to look for, and the overall quality of the training data determines the quality of the final output. The amount of training data required depends on the scope of the project and of the task (a simple road segmentation requires less than a multi-class feature extraction), and on the variety of the input in terms of both sensor type and spatial location. Once a training dataset has been created, the developer can begin training the model. Deep Learning models such as CNNs require a huge amount of computational time to train and normally require specialised equipment; however, once trained, the model can be run on commodity hardware. At GI we have dedicated hardware for training that requires specialised power and cooling systems, and it can still take weeks to fully train a model. Once trained, the model can be evaluated and tested. Depending on the performance of the model, it might require additional training or additional training data. For example, if there is a type of feature the model has difficulty identifying, more training examples might need to be added.
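
As a rough illustration of the two kinds of label data described above, the sketch below represents an object detection label as a bounding box with a class name, and a segmentation label as a grid of class ids. The class scheme, the `BoxLabel` type and the tiny 4 x 4 tile are hypothetical and chosen only to keep the example readable.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical class scheme; a real project defines its own.
CLASS_NAMES = {0: "background", 1: "road", 2: "building"}


@dataclass
class BoxLabel:
    """One object detection label: a class name plus a pixel bounding box.

    The max edges are treated as exclusive in this sketch.
    """
    class_name: str
    x_min: int
    y_min: int
    x_max: int
    y_max: int


# Object detection: a list of labelled boxes for an image tile.
detection_labels = [
    BoxLabel("building", x_min=1, y_min=0, x_max=3, y_max=2),
]

# Segmentation: one class id per pixel for the same tiny 4 x 4 tile.
segmentation_mask = [
    [0, 2, 2, 0],
    [0, 2, 2, 0],
    [1, 1, 1, 1],
    [0, 0, 0, 0],
]


def class_pixel_counts(mask):
    """Count labelled pixels per class name to reveal under-represented classes."""
    counts = Counter(class_id for row in mask for class_id in row)
    return {CLASS_NAMES[class_id]: n for class_id, n in counts.items()}


counts = class_pixel_counts(segmentation_mask)
```

Counting pixels per class in this way is one simple check for whether a feature type is under-represented in the training data before more examples are added.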

The model can be deployed once it has met the required performance metrics, either for analysts to use directly, or as part of an automated data processing workflow. A benefit of CNNs is that although they are slow and computationally intensive to train, they are reusable and efficient to run once the background work has been completed. The process of running a production model on live or fresh data is called model inference. Once in production, a model can be reused indefinitely provided the problem definition doesn’t change, or it can be retrained to match slight changes in the problem space. Inference is computationally cheap: many CNN models that might have taken weeks to train can now be run on laptops or even mobile phones. In the case of remote sensing, models that have taken days to train on specialised hardware might take only minutes to produce results on new imagery data. This characteristic of CNNs makes them very useful for repeat tasks, or tasks that involve very large volumes of data.
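
One common way to run inference over a scene far larger than a model's input size is to split it into overlapping tiles and process each tile in turn. The article does not describe how GI's own pipeline does this, so the sketch below is an assumption for illustration only; the function names, the 512-pixel tile size (borrowed from the earlier 512x512 example) and the 64-pixel overlap are all hypothetical.

```python
def _offsets(size, tile, step):
    """Start positions along one axis so that tiles cover 0..size."""
    if size <= tile:
        return [0]
    offsets = list(range(0, size - tile, step))
    offsets.append(size - tile)  # last tile sits flush with the edge
    return offsets


def tile_windows(width, height, tile=512, overlap=64):
    """Yield (col, row, tile_width, tile_height) windows covering a scene."""
    if not 0 <= overlap < tile:
        raise ValueError("overlap must be non-negative and smaller than tile")
    step = tile - overlap
    for row in _offsets(height, tile, step):
        for col in _offsets(width, tile, step):
            yield col, row, min(tile, width), min(tile, height)


# A hypothetical 20,000 x 20,000 pixel scene.
windows = list(tile_windows(20_000, 20_000))
```

Each window can then be read, passed to the trained model and written back independently, which is part of what makes inference easy to spread across ordinary hardware.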

Weeks of defining models, creating training data and training a model may sound daunting; however, at GI we use a range of techniques to reduce the time required. For example, by using a range of domain experts we are able to streamline the process and maintain a catalogue of training data that may be useful for other projects. We also use a range of techniques specific to CNNs, such as transfer learning and synthetic training data, to reduce model development time and improve performance.

AI in action

To illustrate the theoretical concepts discussed so far, let’s review one of our more recent projects.

GI was approached by the Western Australian Government to provide an innovative Artificial Intelligence (AI) spatial solution for Metropolitan Perth and the Kimberley. The primary aim of these projects was to determine if it was possible to automatically extract complex features from earth observation imagery using AI.

For the Perth project, GI was provided with 500 square kilometres of high-resolution, 4-band, 16-bit aerial imagery over the Perth Metropolitan region. The desired output was vector data describing building footprints, roads, railways, water courses, swimming pools, tree canopies and other infrastructure components extracted from the imagery.

From the provided information and input data, our team could define the problem, the key first step in AI development. Simply put, the problem space was feature extraction using high-resolution multispectral imagery over a broad area, with many different feature types to be identified and extracted.

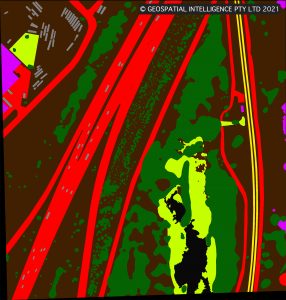

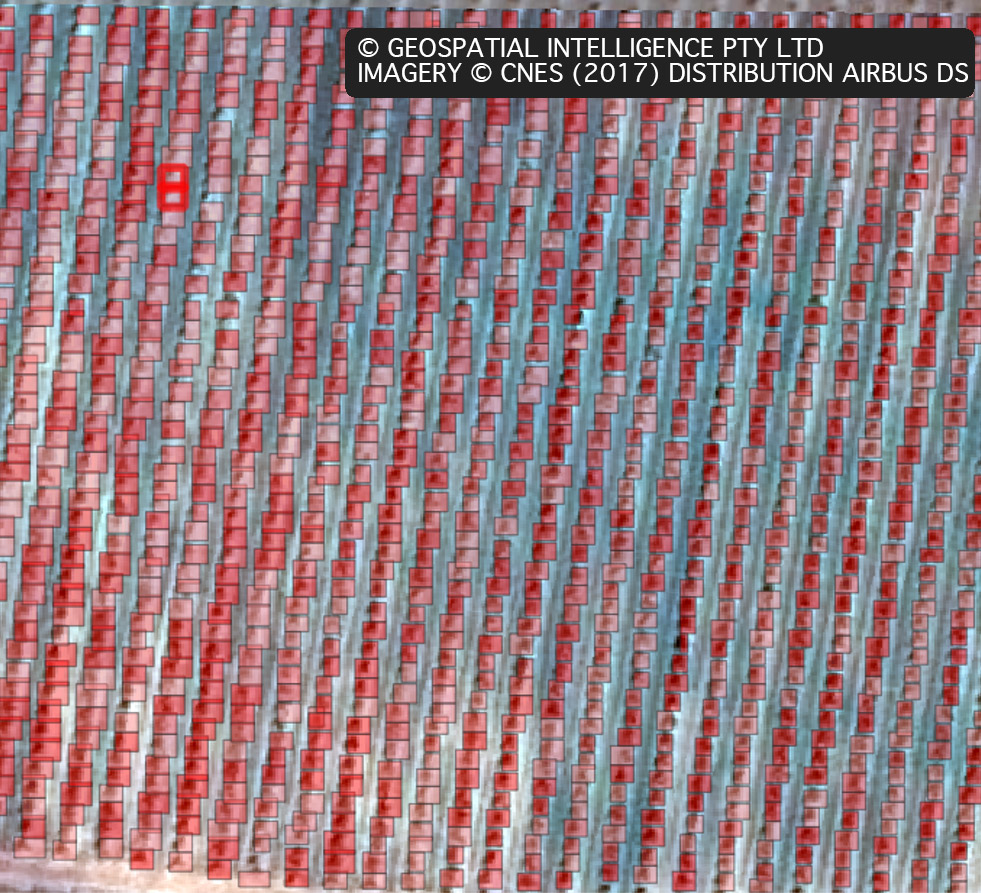

The next step in our AI development process was to define areas of training data. The team chose five initial locations from across the imagery, with the selection designed to provide examples of a variety of locations and features that the project area contained. The team then commenced the manual process of creating the training data masks. The mask is a greyscale image with each pixel representing the class the user wants the AI to assign to it. Figure 2 depicts an example of a mask appropriately coloured for ease of viewing.
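
The relationship between a greyscale class mask and its coloured version can be sketched as a simple palette lookup. The class ids and colours below are hypothetical; the article does not list the scheme used on this project.

```python
# Hypothetical class ids and display colours (red, green, blue).
PALETTE = {
    0: (0, 0, 0),        # background
    1: (128, 128, 128),  # road
    2: (255, 0, 0),      # building
    3: (0, 0, 255),      # water
}


def colourise(mask, palette=PALETTE):
    """Replace each class id in a 2D mask with its RGB display colour."""
    return [[palette[class_id] for class_id in row] for row in mask]


# A tiny 2 x 3 greyscale mask: each value is the class of that pixel.
greyscale_mask = [
    [0, 1, 1],
    [2, 2, 3],
]
coloured = colourise(greyscale_mask)
```

The model is trained on the greyscale mask; the coloured version exists only to make the classes easier for a person to tell apart.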

Once the initial five training masks had been created (using several techniques but ultimately requiring a painstaking manual quality assurance process), the team could start model training. We selected a CNN architecture that contains slightly more than 34 million trainable parameters. This type of model is well-suited to learning the complex features expected in a project of this kind.

After an initial training period of 48 hours, the team could then perform an initial model evaluation. In this case, the team decided that the model was struggling with some feature types, in particular railway lines and bridges, so additional training data was selected to provide more examples of those features. A further 72 hours of training brought the model accuracy into line with the team’s objective.
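
The article does not say which metric the team used to spot the struggling feature types. One widely used option for segmentation is per-class intersection over union (IoU), sketched below on made-up labels; the function name and the class ids are assumptions for illustration.

```python
def per_class_iou(truth, predicted, class_ids):
    """Intersection over union for each class, from flat lists of class ids.

    Returns None for a class that appears in neither list.
    """
    scores = {}
    for class_id in class_ids:
        intersection = sum(
            1 for t, p in zip(truth, predicted) if t == class_id and p == class_id
        )
        union = sum(
            1 for t, p in zip(truth, predicted) if t == class_id or p == class_id
        )
        scores[class_id] = intersection / union if union else None
    return scores


# Made-up labels: 0 = background, 1 = road, 2 = railway.
truth = [0, 0, 1, 1, 2, 2]
predicted = [0, 0, 1, 0, 2, 1]
scores = per_class_iou(truth, predicted, class_ids=[0, 1, 2])
```

A class whose score sits well below the others is a candidate for additional training examples, which is the kind of decision described above for railway lines and bridges.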

Once the model was trained, tested, and evaluated to be ready for production, the team commenced the task of broad-scale data processing. The entire 500 square kilometres of imagery (roughly 0.5TB of data) was pipelined for an overnight inference process using the developed model. Once the inference was complete, the team were able to perform quality assurance and convert the output data into the final format required by the client.
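
As a back-of-envelope check on those figures, the sketch below estimates the ground size of a pixel implied by the stated data volume. It assumes the imagery is stored uncompressed, that a terabyte means 10^12 bytes, and that pixels are square with no overlap between scenes; none of these details are given in the article, so the result is indicative only.

```python
def implied_pixel_size_m(area_km2, total_bytes, bands, bits_per_band):
    """Ground size of one square pixel implied by an uncompressed data volume."""
    bytes_per_pixel = bands * bits_per_band / 8
    pixel_count = total_bytes / bytes_per_pixel
    area_m2 = area_km2 * 1_000_000
    return (area_m2 / pixel_count) ** 0.5


# Figures from the text: 500 square kilometres, roughly 0.5 TB, 4 bands, 16 bits.
pixel_size = implied_pixel_size_m(500, 0.5e12, bands=4, bits_per_band=16)
print(f"{pixel_size:.3f} m")  # about 0.089 m
```

Under those assumptions the figures imply pixels of roughly 9 cm on the ground; compression or a different definition of a terabyte would change the result.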

The final output was over a million features, and the benefit of using GI’s AI system was that the entire extraction and delivery process could be done in less than 24 hours.

Other examples

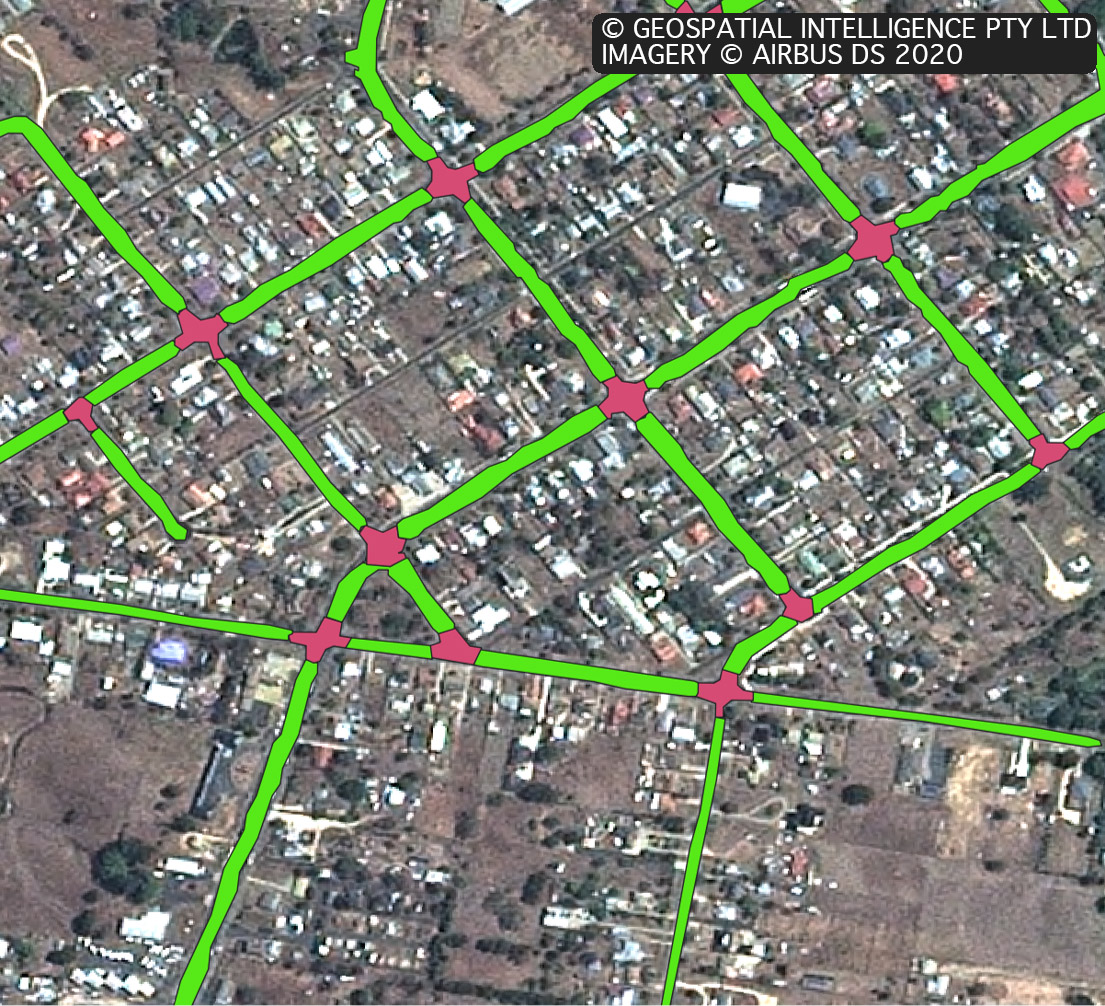

Feature extraction using aerial imagery isn’t the only thing the team here at GI has been working on. Below are a few other relevant examples.

What’s next?

Beyond electro-optical

Over 800 earth observation satellites are currently in orbit, with sensor payloads ranging from optical multispectral cameras to Hyperspectral and Synthetic Aperture Radar (SAR).

The number of earth observation satellites has been growing rapidly, making continuous global data collection possible and bringing about a New Space Paradigm, in which the availability and quantity of data from satellites make the efficient extraction of information from that data more important than ever before. Under the New Space Paradigm, data capture can be scheduled across multiple constellations and sensor types. Data can be collected multiple times an hour if required, and data can be delivered by a global network of receiving stations within minutes of capture.

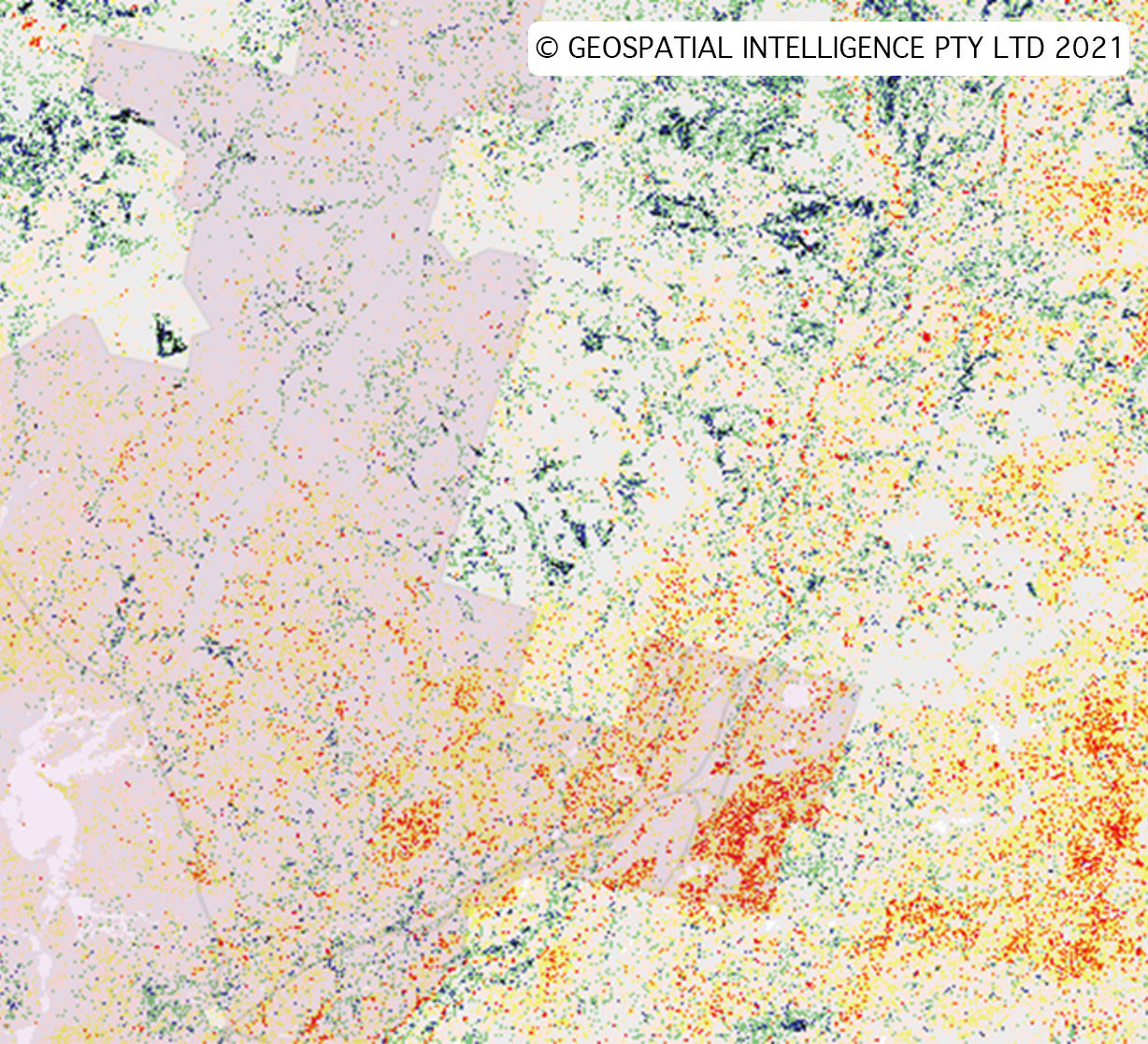

The nature of the New Space Paradigm will require remote sensing professionals to take advantage of existing and emerging technology to make sense of the large volumes of available data. AI can help analysts take advantage of otherwise complex data such as high-resolution SAR and Hyperspectral imagery, time stacks and fused multi-sensor data.

Synthetic Aperture Radar (SAR)

A notable trend within the New Space Paradigm is the availability of very high-resolution SAR imagery. SAR has traditionally been the domain of governments and defence forces around the world, but companies like Capella Space and ICEYE are now launching constellations of new-generation SAR satellites.

This access to commercial very high-resolution SAR imagery is beneficial to analysts for several reasons. SAR sensors are ‘active’ sensors and are therefore not reliant on daylight and are almost impervious to weather conditions. SAR data can be particularly useful in feature extraction, maritime domain awareness, elevation model creation and natural disaster management. The disadvantage is that SAR imagery can be more difficult to interpret visually. AI models are proving to be a vital tool for analysts, providing an efficient way to rapidly extract useful information from SAR.

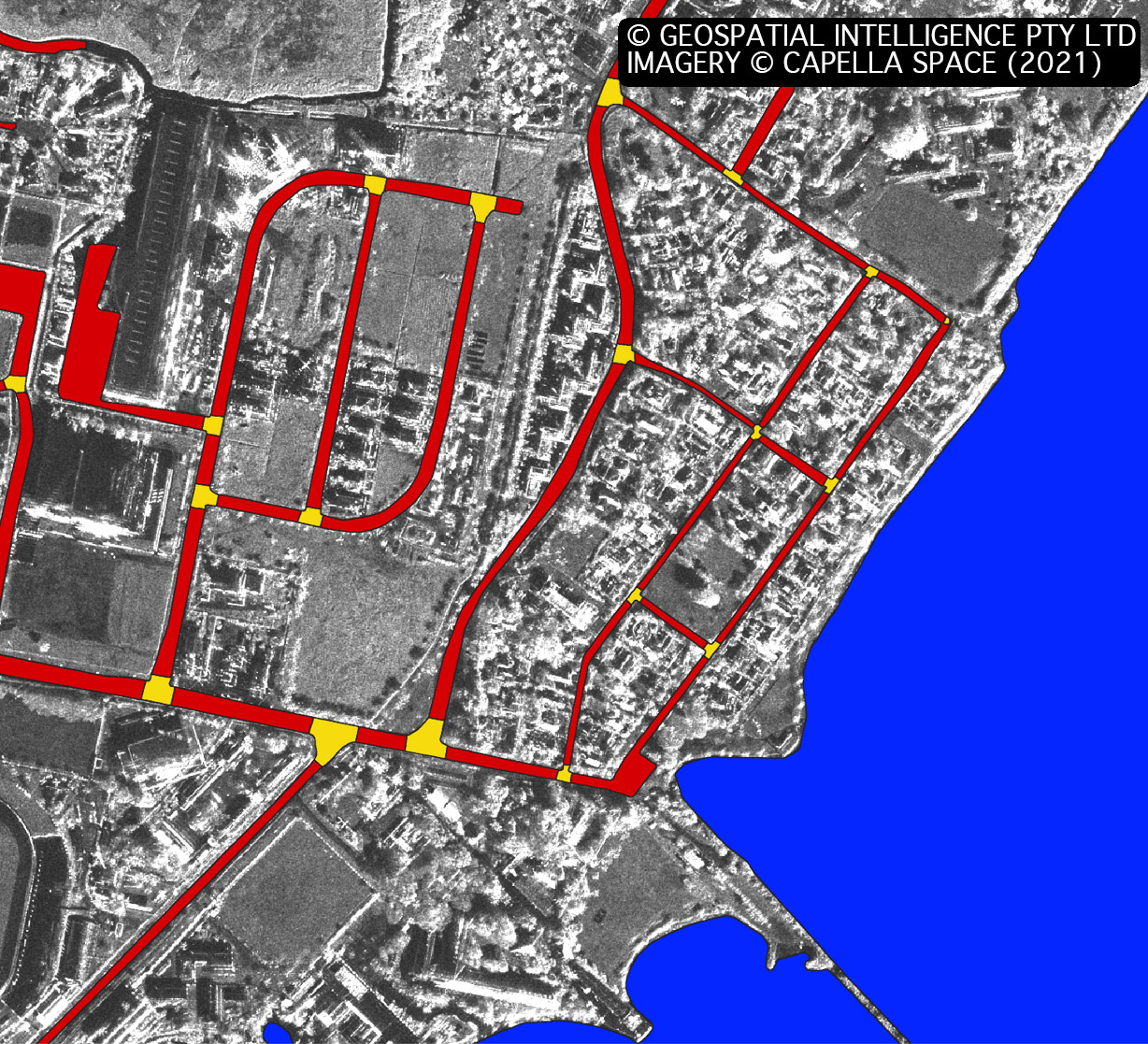

To demonstrate the effectiveness of SAR data, our team used GI’s AI systems to quickly train a model to extract roads, intersections, and water from Capella Space SAR imagery. After several days of training, the AI model was able to complete the entire extraction and vectorisation process in less than 10 minutes for an area of 25 square kilometres. Figure 9 shows the vector output of the model overlaid on the original imagery.

While this is just one example, the possibilities for this type of capability are endless. The ability to collect this kind of information multiple times a day, even through cloud cover, makes it especially useful in time-critical situations such as disaster relief and in the National Security sector.